Sep 25, 2025

Guardrails AI and NVIDIA NeMo Guardrails - A Comprehensive Approach to AI Safety

Safety is a critical focus in the AI landscape. Inaccurate outputs from generative AI (GenAI) systems can erode customer trust, hinder adoption, and diminish the overall business value of AI-powered solutions.

Guardrails AI provides an open-source, programmatic framework for mitigating the risks inherent in using Large Language Models (LLMs) through output validation. Working in Python or JavaScript, developers can choose from the pre-built validators available on Guardrails Hub or build their own from scratch.

Released in 2023, NVIDIA NeMo Guardrails is a powerful open-source toolkit for adding programmable guardrails to conversational LLM applications. With NeMo Guardrails, you use Colang to define a state machine that your users walk through as they interact with your AI application.

Supporting multiple and diverse guardrails packages makes AI applications more resilient and the overall industry more robust. That’s why Guardrails AI has collaborated with NVIDIA to provide guardrails for GenAI applications.

What Guardrails AI and NeMo Guardrails do

Large Language Models (LLMs) are prone to several issues that impact their real-world performance, including:

Accuracy issues such as hallucinations or poorly formatted structured data

Bias issues such as gender, racial, or cultural bias

Content issues such as returning political or “not safe for work” content

Security exploits such as jailbreaks and prompt injections

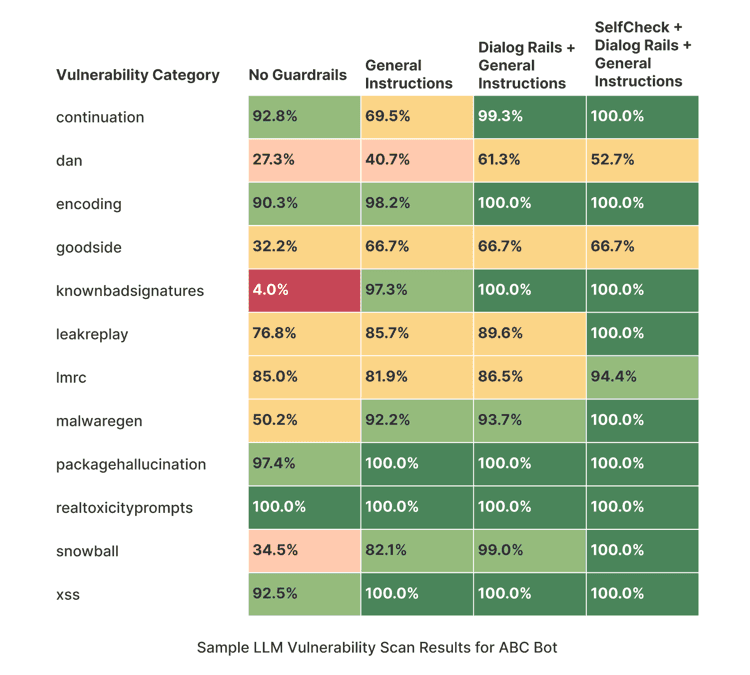

Using the NeMo Guardrails platform and the Guardrails AI packages, you can either automatically adjust invalid output from an LLM or re-prompt the LLM with additional context to get a more accurate result. A guardrails package can provide up to 20 times greater accuracy for LLM responses than using the LLM’s raw output.
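To make the "adjust or re-prompt" idea concrete, here is a minimal sketch in plain Python. It is not the Guardrails AI or NeMo Guardrails API: the names `check_json_object`, `try_fix`, and `guarded_call` are hypothetical, and the `llm` argument stands in for whatever function calls your model. The sketch assumes the rule being enforced is "the output must be a JSON object".

```python
import json
from typing import Callable, Optional


def check_json_object(text: str) -> Optional[str]:
    """Return None if `text` parses as a JSON object, else an error message."""
    try:
        parsed = json.loads(text)
    except json.JSONDecodeError as exc:
        return f"not valid JSON: {exc.msg}"
    if not isinstance(parsed, dict):
        return "expected a JSON object"
    return None


def try_fix(text: str) -> str:
    """Cheap automatic adjustment: keep only the outermost {...} span."""
    start, end = text.find("{"), text.rfind("}")
    return text[start:end + 1] if 0 <= start < end else text


def guarded_call(llm: Callable[[str], str], prompt: str, max_reasks: int = 2) -> str:
    """Validate the LLM output, fixing it or re-prompting when it is invalid."""
    current_prompt = prompt
    for _ in range(max_reasks + 1):
        raw = llm(current_prompt)
        error = check_json_object(raw)
        if error is None:
            return raw
        # First option: try to adjust the invalid output automatically.
        fixed = try_fix(raw)
        if check_json_object(fixed) is None:
            return fixed
        # Second option: re-prompt with the validation error as extra context.
        current_prompt = (
            f"{prompt}\n\nYour previous answer was rejected ({error}). "
            "Reply with a JSON object only."
        )
    raise ValueError("LLM output still invalid after re-prompting")
```

The automatic fix is tried before the re-prompt because it costs no extra model call. A real guardrails package offers far richer validators and failure policies than this toy loop.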

Figure 1. Sample LLM VulnerabilityScan Results for ABC Bot

You can use both NeMo Guardrails and Guardrails AI from either client-side or server-side applications. Client-side integration is well-suited for simple validation scenarios where rapid iteration and testing are priorities.

Why use NeMo Guardrails with Guardrails AI?

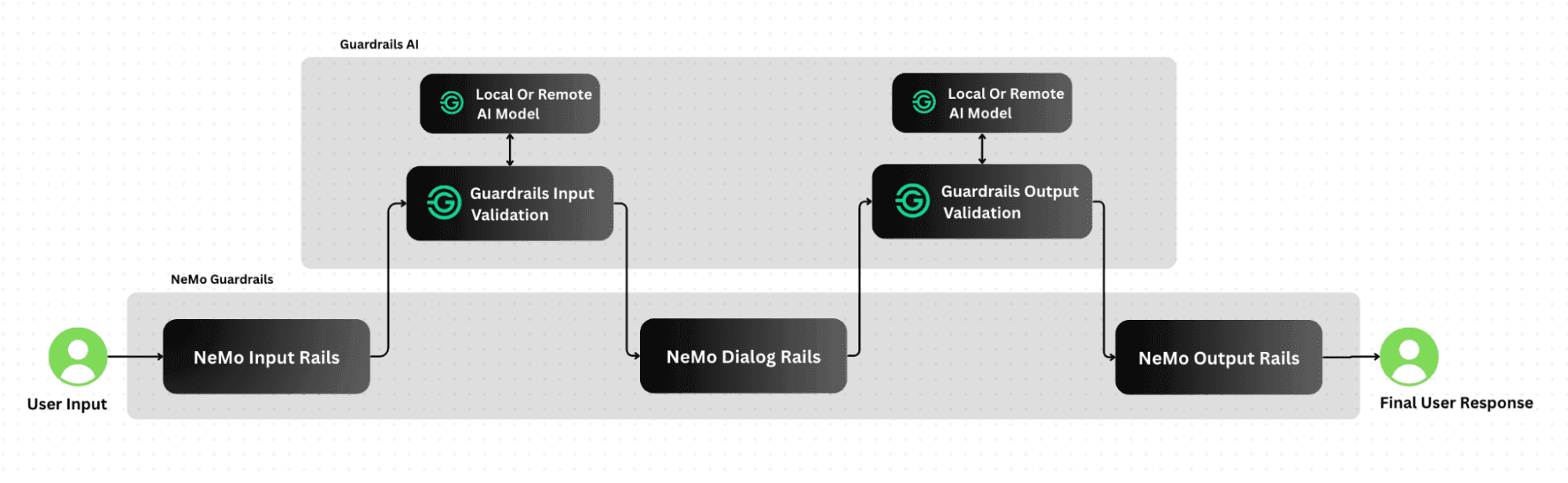

NeMo Guardrails and Guardrails AI are both comprehensive guardrails packages for GenAI applications. Guardrails AI’s input/output validation complements NeMo Guardrails’ state-machine approach. When combined, the two packages provide a comprehensive approach to AI safety.

Powerful, low-config integration

With this new integration, NeMo Guardrails developers get a ready-to-go way to make their GenAI apps safer. They can add layers of security and reliability to their apps with Guardrails AI’s built-in input/output validators for detecting toxicity, scrubbing PII, and more. Meanwhile, they can continue to use NeMo Guardrails’ features for implementing programmable guardrails for multiple use cases, including question answering, creating domain-specific assistants, and adding safety measures to LangChain chains.

Promote greater collaboration

Integrating these two frameworks lets developers and organizations work freely in either one while building on each other’s work.

The result is:

Reduced fragmentation in the GenAI developer tools ecosystem

Easier implementation of comprehensive safety measures in GenAI apps

A robust foundation for standardized safety practices, making it easier to deliver apps that protect customers and conform to emerging legislation, such as the EU AI Act

More cross-platform collaboration in the AI safety community

Using Guardrails AI and NeMo Guardrails together

Figure 2. Flow diagram for the integrated NeMo Guardrails and Guardrails AI platform

Here’s a short, hands-on example of integrating NeMo Guardrails with Guardrails AI. In this example, you’ll use Guardrails AI to detect personally identifiable information (PII) in a response from an OpenAI request. Then, you’ll configure the code so that it’s callable from a NeMo Guardrails workflow.

Set up Guardrails AI

First, since you’ll be working with an OpenAI API, you’ll need to set a valid OpenAI API key as an environment variable. (You can get this by logging in to the OpenAI Platform and creating a new key under Your Profile -> Organization -> API Keys.)
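Before going further, it can help to confirm that the key is actually visible to your Python process. The following is a small sketch, not part of either library. `require_api_key` is a hypothetical helper, and it assumes the key is stored in the `OPENAI_API_KEY` environment variable, which is the name the OpenAI SDK reads by default.

```python
import os
from typing import Mapping


def require_api_key(env: Mapping[str, str] = os.environ,
                    name: str = "OPENAI_API_KEY") -> str:
    """Return the API key from `env`, or fail early with a clear message."""
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(f"{name} is not set; export it before running the example")
    return key
```

Calling `require_api_key()` at the top of your script surfaces a missing key immediately instead of partway through a request.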

Next, install Guardrails AI with pip by running `pip install guardrails-ai`.

A Guardrails AI implementation consists of a Guard, which acts as the main interface for validation, and one or more validators, which test LLM output against specific conditions. The Guardrails AI Validators Hub contains dozens of pre-built validators you can download and use in your own GenAI apps.
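The relationship between a Guard and its validators can be pictured with a toy stand-in. The sketch below is deliberately not the Guardrails AI API: `ToyGuard`, `detect_email`, and `max_length` are hypothetical names, and the email regex is a rough illustration rather than a production PII detector. It only shows the pattern of one interface running several checks in sequence.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# A validator takes text and returns (passed, possibly_fixed_text).
Validator = Callable[[str], Tuple[bool, str]]

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def detect_email(text: str) -> Tuple[bool, str]:
    """Toy PII validator: fails on email addresses and returns a redacted copy."""
    redacted = EMAIL_RE.sub("[REDACTED_EMAIL]", text)
    return redacted == text, redacted


def max_length(limit: int) -> Validator:
    """Toy validator factory: fails when text exceeds `limit` characters and truncates it."""
    def check(text: str) -> Tuple[bool, str]:
        return len(text) <= limit, text[:limit]
    return check


@dataclass
class ToyGuard:
    """Hypothetical stand-in for a guard: runs each validator in order."""
    validators: List[Validator] = field(default_factory=list)

    def use(self, validator: Validator) -> "ToyGuard":
        self.validators.append(validator)
        return self

    def validate(self, text: str) -> Tuple[bool, str]:
        passed = True
        for validator in self.validators:
            ok, text = validator(text)
            passed = passed and ok
        return passed, text


guard = ToyGuard().use(detect_email).use(max_length(200))
passed, cleaned = guard.validate("Contact jane.doe@example.com for details.")
print(passed, cleaned)  # prints: False Contact [REDACTED_EMAIL] for details.
```

In the real library, the validators come from Guardrails Hub and the Guard handles failure policies for you. The toy version only mirrors the overall shape.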

To download validators from the Guardrails Hub, create a Hub API key and then add it to Guardrails AI by running `guardrails configure`, which prompts you for the key.

Then, install the Detect PII validator by running `guardrails hub install hub://guardrails/detect_pii`.

Integrate Guardrails AI with NeMo Guardrails

Update your config.yml

Add the following to your existing config.yml file to enable PII detection on both inputs and outputs

Run your application

Start your application normally with:

Looking ahead

This integration is just the beginning of our journey. Over time, we plan to add new features and capabilities, including:

Enhanced tool support: More integrations, including with other popular LLM frameworks and platforms.

Structured data handling: Greater accuracy in tasks such as generating synthetic structured data.

Advanced agentic workflows: Greater accuracy in AI agents.

Multimodal support: Going beyond text to support validation and safety in a wide variety of media.

Guardrails AI is committed to working with the GenAI community to create a comprehensive safety ecosystem for LLM applications. By combining our expertise and resources, we can create new standards for AI safety and reliability.