AI Guardrails Index: Hallucination

We broke AI guardrails down into six categories. For each, we curated datasets and models that demonstrate the state of AI safety using LLMs and other open-source models.

Introduction

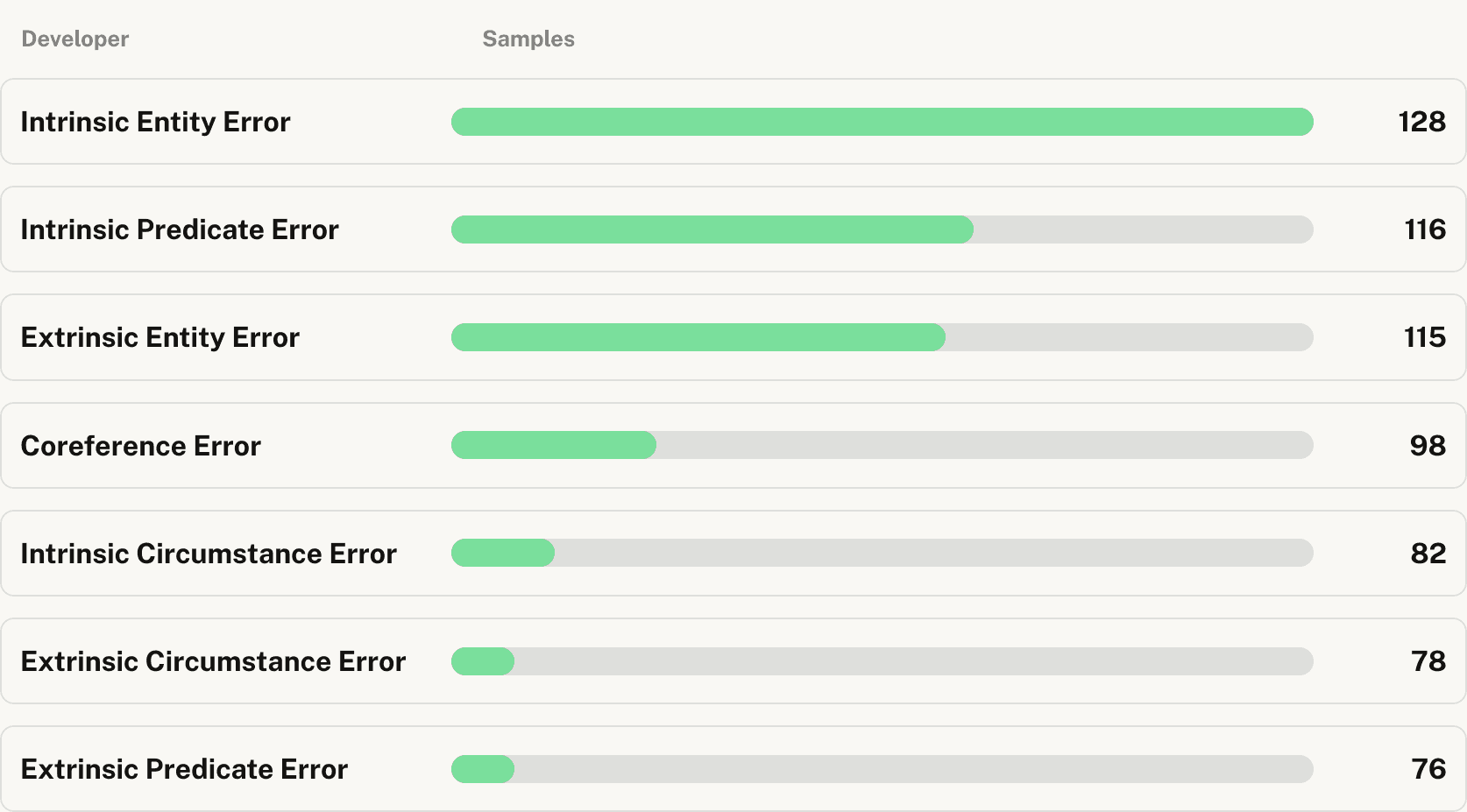

AI hallucination is a well-known and widely discussed risk in real-world applications. Mitigating it requires guardrails, even when prompts are context-rich and the system uses RAG. Our benchmark evaluates leading guardrail solutions using a rigorous methodology and high-quality datasets that represent diverse scenarios and hallucination types.

Conclusion

Guardrails AI's Provenance-llm and Minicheck emerge as the top performers in hallucination detection, outperforming competitors across most categories. Provenance-llm excels in accuracy, particularly for intrinsic entity errors, which makes it well suited to high-stakes applications in finance, legal, and healthcare. Minicheck offers a balanced approach, combining good accuracy with faster processing, and is suitable for real-time applications such as chatbots. GCP and Azure lag in accuracy but may fit less critical scenarios or cases where cloud integration is key. Vectara shows variable performance and may be useful for specific use cases. Organizations should weigh accuracy, speed, and which error types matter most against their own requirements, risk profile, and infrastructure constraints. Selecting the right model is crucial for safeguarding AI-driven services and maintaining user trust.

Leaderboard
